1 | !wget --no-check-certificate \ |

--2019-05-23 08:11:52-- https://storage.googleapis.com/laurencemoroney-blog.appspot.com/horse-or-human.zip

Resolving storage.googleapis.com (storage.googleapis.com)... 108.177.125.128, 2404:6800:4008:c06::80

Connecting to storage.googleapis.com (storage.googleapis.com)|108.177.125.128|:443... connected.

HTTP request sent, awaiting response... 200 OK

Length: 149574867 (143M) [application/zip]

Saving to: ‘/tmp/horse-or-human.zip’

/tmp/horse-or-human 100%[===================>] 142.65M 81.4MB/s in 1.8s

2019-05-23 08:11:54 (81.4 MB/s) - ‘/tmp/horse-or-human.zip’ saved [149574867/149574867]

1 | !wget --no-check-certificate \ |

--2019-05-23 08:13:02-- https://storage.googleapis.com/laurencemoroney-blog.appspot.com/validation-horse-or-human.zip

Resolving storage.googleapis.com (storage.googleapis.com)... 108.177.125.128, 2404:6800:4008:c06::80

Connecting to storage.googleapis.com (storage.googleapis.com)|108.177.125.128|:443... connected.

HTTP request sent, awaiting response... 200 OK

Length: 11480187 (11M) [application/zip]

Saving to: ‘/tmp/validation-horse-or-human.zip’

/tmp/validation-hor 100%[===================>] 10.95M --.-KB/s in 0.1s

2019-05-23 08:13:03 (96.6 MB/s) - ‘/tmp/validation-horse-or-human.zip’ saved [11480187/11480187]

The following Python code uses the os module, which gives you access to the file system, and the zipfile module, which lets you unzip the data.

1 | import os |

The contents of each .zip are extracted to a base directory (/tmp/horse-or-human for the training set), which in turn contains horses and humans subdirectories.
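As a minimal sketch of the extraction step, the helper below unpacks an archive into a target directory and lists what ends up at the top level. The function name `extract_archive` is hypothetical; the paths are the ones shown in the wget output, and the call is skipped if the download is not present.

```python
import os
import zipfile


def extract_archive(zip_path, dest_dir):
    """Extract every member of zip_path into dest_dir; return the top-level entries."""
    os.makedirs(dest_dir, exist_ok=True)
    with zipfile.ZipFile(zip_path, "r") as archive:
        archive.extractall(dest_dir)
    return sorted(os.listdir(dest_dir))


# Paths taken from the download step; nothing happens if the file is missing.
training_zip = "/tmp/horse-or-human.zip"
if os.path.exists(training_zip):
    print(extract_archive(training_zip, "/tmp/horse-or-human"))
```

For the training archive we would expect the listing to show the horses and humans subdirectories described above.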

In short: The training set is the data that is used to tell the neural network model that 'this is what a horse looks like', 'this is what a human looks like' etc.

One thing to pay attention to in this sample: we do not explicitly label the images as horses or humans. If you remember, in the handwriting example earlier we labelled each image ('this is a 1', 'this is a 7', etc.). Later you'll see something called an ImageDataGenerator being used. It is coded to read images from subdirectories and to label them automatically from the name of each subdirectory. So, for example, you will have a 'training' directory containing a 'horses' directory and a 'humans' one. ImageDataGenerator will label the images appropriately for you, saving a coding step.
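To make the directory-based labelling concrete, here is a small sketch of the idea in plain Python. It is not the Keras implementation; `labels_from_subdirs` is a hypothetical helper that mimics the documented behaviour of assigning class indices in alphanumeric order of the subdirectory names, so horses maps to 0 and humans to 1.

```python
import os


def labels_from_subdirs(root_dir):
    """Map each subdirectory name to an integer label and pair every file with it."""
    class_names = sorted(
        name for name in os.listdir(root_dir)
        if os.path.isdir(os.path.join(root_dir, name))
    )
    # Alphanumeric order decides the index, e.g. {'horses': 0, 'humans': 1}.
    class_indices = {name: index for index, name in enumerate(class_names)}
    samples = []
    for name in class_names:
        class_dir = os.path.join(root_dir, name)
        for filename in sorted(os.listdir(class_dir)):
            samples.append((os.path.join(class_dir, filename), class_indices[name]))
    return class_indices, samples


# Only runs if the training set has already been extracted.
if os.path.isdir("/tmp/horse-or-human"):
    indices, samples = labels_from_subdirs("/tmp/horse-or-human")
    print(indices, len(samples))
```

This mapping is why a prediction close to 0 is read as "horse" and a prediction close to 1 as "human" later on.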

Let's define each of these directories:

1 | # Directory with our training horse pictures |

Now, let's see what the filenames look like in the horses and humans training directories:

1 | train_horse_names = os.listdir(train_horse_dir) |

['horse29-9.png', 'horse15-9.png', 'horse05-0.png', 'horse30-0.png', 'horse35-4.png', 'horse02-3.png', 'horse31-8.png', 'horse09-5.png', 'horse03-9.png', 'horse37-2.png']

['human13-16.png', 'human08-24.png', 'human14-00.png', 'human16-16.png', 'human06-27.png', 'human04-17.png', 'human03-18.png', 'human16-00.png', 'human16-17.png', 'human09-24.png']

['horse1-276.png', 'horse4-530.png', 'horse2-040.png', 'horse2-183.png', 'horse2-201.png', 'horse1-554.png', 'horse6-218.png', 'horse3-011.png', 'horse6-004.png', 'horse3-326.png']

['valhuman02-13.png', 'valhuman01-23.png', 'valhuman03-24.png', 'valhuman01-07.png', 'valhuman02-14.png', 'valhuman05-08.png', 'valhuman03-12.png', 'valhuman05-27.png', 'valhuman04-10.png', 'valhuman05-11.png']

Let's find out the total number of horse and human images in the directories:

1 | print('total training horse images:', len(os.listdir(train_horse_dir))) |

total training horse images: 500

total training human images: 527

total validation horse images: 128

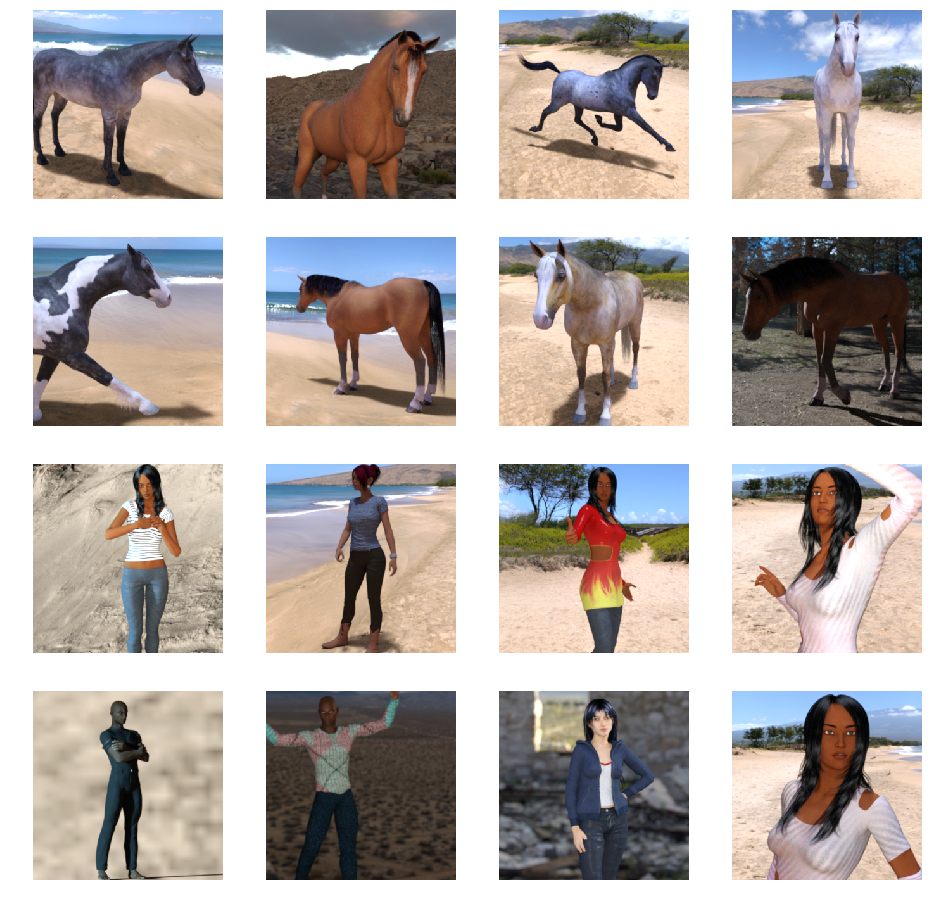

total validation human images: 128

Now let's take a look at a few pictures to get a better sense of what they look like. First, configure the matplotlib parameters:

1 | %matplotlib inline |

Now, display a batch of 8 horse and 8 human pictures. You can rerun the cell to see a fresh batch each time:

1 | # Set up matplotlib fig, and size it to fit 4x4 pics |

Building a Small Model from Scratch

Before we continue, let's start defining the model:

Step 1 will be to import tensorflow.

1 | import tensorflow as tf |

We then add convolutional layers as in the previous example, and flatten the final result to feed into the densely connected layers.

Finally we add the densely connected layers.

Note that because we are facing a two-class classification problem, i.e. a binary classification problem, we will end our network with a sigmoid activation, so that the output of our network will be a single scalar between 0 and 1, encoding the probability that the current image is class 1 (as opposed to class 0).
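To illustrate what the sigmoid output means, the sketch below squashes a raw score into the range 0 to 1 and turns it into a class decision. The 0.5 threshold and the helper names are assumptions for illustration, not something taken from the model code.

```python
import math


def sigmoid(logit):
    """Numerically stable logistic function: maps any real number into (0, 1)."""
    if logit >= 0:
        return 1.0 / (1.0 + math.exp(-logit))
    exp_logit = math.exp(logit)
    return exp_logit / (1.0 + exp_logit)


def predicted_class(probability, threshold=0.5):
    """Return 1 if the probability of class 1 exceeds the threshold, else 0."""
    return 1 if probability > threshold else 0


for logit in (-4.0, 0.0, 4.0):
    p = sigmoid(logit)
    print(f"logit={logit:+.1f} -> p(class 1)={p:.3f} -> class {predicted_class(p)}")
```

A strongly negative score gives a probability near 0 (class 0), and a strongly positive score gives a probability near 1 (class 1).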

1 | model = tf.keras.models.Sequential([ |

WARNING:tensorflow:From /usr/local/lib/python3.6/dist-packages/tensorflow/python/ops/resource_variable_ops.py:435: colocate_with (from tensorflow.python.framework.ops) is deprecated and will be removed in a future version.

Instructions for updating:

Colocations handled automatically by placer.

The model.summary() method call prints a summary of the neural network:

1 | model.summary() |

_________________________________________________________________

Layer (type) Output Shape Param #

=================================================================

conv2d (Conv2D) (None, 298, 298, 16) 448

_________________________________________________________________

max_pooling2d (MaxPooling2D) (None, 149, 149, 16) 0

_________________________________________________________________

conv2d_1 (Conv2D) (None, 147, 147, 32) 4640

_________________________________________________________________

max_pooling2d_1 (MaxPooling2 (None, 73, 73, 32) 0

_________________________________________________________________

conv2d_2 (Conv2D) (None, 71, 71, 64) 18496

_________________________________________________________________

max_pooling2d_2 (MaxPooling2 (None, 35, 35, 64) 0

_________________________________________________________________

conv2d_3 (Conv2D) (None, 33, 33, 64) 36928

_________________________________________________________________

max_pooling2d_3 (MaxPooling2 (None, 16, 16, 64) 0

_________________________________________________________________

conv2d_4 (Conv2D) (None, 14, 14, 64) 36928

_________________________________________________________________

max_pooling2d_4 (MaxPooling2 (None, 7, 7, 64) 0

_________________________________________________________________

flatten (Flatten) (None, 3136) 0

_________________________________________________________________

dense (Dense) (None, 512) 1606144

_________________________________________________________________

dense_1 (Dense) (None, 1) 513

=================================================================

Total params: 1,704,097

Trainable params: 1,704,097

Non-trainable params: 0

_________________________________________________________________

The "output shape" column shows how the size of your feature map evolves in each successive layer. The convolution layers reduce the size of the feature maps slightly because no padding is applied (each convolution here trims two pixels from the height and width, for example from 149 to 147), and each pooling layer halves the dimensions.
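The numbers in the summary can be reproduced by hand. The sketch below assumes a 300x300 input with 3 colour channels, 3x3 convolution kernels without padding, and 2x2 max pooling; these values are inferred from the output shapes and parameter counts in the summary, since the model definition itself is not shown in full.

```python
def conv_valid(size, kernel=3):
    """Output width/height of an unpadded convolution with stride 1."""
    return size - kernel + 1


def max_pool(size, pool=2):
    """Output width/height of non-overlapping max pooling (rounds down)."""
    return size // pool


def conv_params(in_channels, out_channels, kernel=3):
    """Weights plus one bias per filter."""
    return (kernel * kernel * in_channels + 1) * out_channels


def dense_params(in_units, out_units):
    """Weights plus one bias per output unit."""
    return (in_units + 1) * out_units


size, channels = 300, 3            # assumed input: 300x300 RGB
filters = [16, 32, 64, 64, 64]     # filter counts read from the summary
total_params = 0

for out_channels in filters:
    size = conv_valid(size)
    total_params += conv_params(channels, out_channels)
    print(f"conv2d       -> {size}x{size}x{out_channels}")
    size = max_pool(size)
    channels = out_channels
    print(f"max_pooling  -> {size}x{size}x{channels}")

flat_units = size * size * channels
total_params += dense_params(flat_units, 512) + dense_params(512, 1)
print("flatten units:", flat_units)
print("total params:", total_params)
```

Under these assumptions the arithmetic gives 3,136 flattened units and 1,704,097 parameters, matching the summary.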

Next, we'll configure the specifications for model training. We will train our model with the binary_crossentropy loss, because it's a binary classification problem and our final activation is a sigmoid. (For a refresher on loss metrics, see the Machine Learning Crash Course.) We will use the rmsprop optimizer with a learning rate of 0.001. During training, we will want to monitor classification accuracy.
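As a refresher on what binary cross-entropy measures, here is a plain-Python sketch. It is a simplified illustration rather than the Keras implementation; the clipping constant `eps` is an assumption that keeps the logarithm finite.

```python
import math


def binary_crossentropy(y_true, y_pred, eps=1e-7):
    """Mean of -[y*log(p) + (1-y)*log(1-p)] over all examples."""
    total = 0.0
    for label, prob in zip(y_true, y_pred):
        prob = min(max(prob, eps), 1.0 - eps)  # avoid log(0)
        total += -(label * math.log(prob) + (1 - label) * math.log(1.0 - prob))
    return total / len(y_true)


labels = [1, 0, 1, 0]
print("confident and right:", binary_crossentropy(labels, [0.9, 0.1, 0.8, 0.2]))
print("always guessing 0.5:", binary_crossentropy(labels, [0.5, 0.5, 0.5, 0.5]))
print("confident and wrong:", binary_crossentropy(labels, [0.1, 0.9, 0.2, 0.8]))
```

A model that always outputs 0.5 scores ln 2 (about 0.693), which is a handy reference point when reading the loss values during training: losses near 0.69 mean the network is doing no better than chance.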

NOTE: In this case, using the RMSprop optimization algorithm is preferable to stochastic gradient descent (SGD), because RMSprop automates learning-rate tuning for us. (Other optimizers, such as Adam and Adagrad, also automatically adapt the learning rate during training, and would work equally well here.)
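To give a feel for how RMSprop adapts the step size, the sketch below applies the basic RMSprop update rule to a single weight while minimizing the toy function f(w) = w². It is a simplified illustration, not the TensorFlow implementation: the decay rate `rho=0.9` and the small constant `eps` are assumed values, and the larger learning rate in the loop is only there to make the toy problem converge quickly.

```python
import math


def rmsprop_step(weight, grad, cache, learning_rate=0.001, rho=0.9, eps=1e-7):
    """One RMSprop update: scale the gradient by a running average of its square."""
    cache = rho * cache + (1.0 - rho) * grad * grad
    weight = weight - learning_rate * grad / (math.sqrt(cache) + eps)
    return weight, cache


weight, cache = 1.0, 0.0
for _ in range(500):
    grad = 2.0 * weight  # derivative of w**2
    weight, cache = rmsprop_step(weight, grad, cache, learning_rate=0.01)

print("weight after 500 steps:", weight)
```

Because the gradient is divided by the root of its own recent magnitude, the effective step stays on the order of the learning rate whether the raw gradient is large or small.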

1 | from tensorflow.keras.optimizers import RMSprop |

Data Preprocessing

Let's set up data generators that will read pictures in our source folders, convert them to float32 tensors, and feed them (with their labels) to our network. We'll have one generator for the training images and one for the validation images. Our generators will yield batches of images of size 300x300 and their labels (binary).

As you may already know, data that goes into neural networks should usually be normalized in some way to make it more amenable to processing by the network. (It is uncommon to feed raw pixels into a convnet.) In our case, we will preprocess our images by normalizing the pixel values to be in the [0, 1] range (originally all values are in the [0, 255] range).
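The two ideas above, rescaling pixels and feeding the network in batches, can be sketched in plain Python. The helper names are hypothetical, and the batch size of 128 is an assumption used only for illustration; with 1,027 training images it would give 9 steps per epoch.

```python
import math


def rescale(pixels, max_value=255.0):
    """Map raw pixel values from [0, 255] to [0, 1]."""
    return [value / max_value for value in pixels]


def batches(samples, batch_size):
    """Yield consecutive slices of at most batch_size items."""
    for start in range(0, len(samples), batch_size):
        yield samples[start:start + batch_size]


print(rescale([0, 51, 255]))

num_training_images = 1027   # total of the horse and human training counts
batch_size = 128             # assumed value, for illustration only
steps_per_epoch = math.ceil(num_training_images / batch_size)
print("steps per epoch:", steps_per_epoch)
```

The last batch is smaller than the others whenever the number of images is not a multiple of the batch size.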

In Keras this can be done via the keras.preprocessing.image.ImageDataGenerator class using the rescale parameter. This ImageDataGenerator class allows you to instantiate generators of augmented image batches (and their labels) via .flow(data, labels) or .flow_from_directory(directory). These generators can then be used with the Keras model methods that accept data generators as inputs: fit_generator, evaluate_generator, and predict_generator.

1 | from tensorflow.keras.preprocessing.image import ImageDataGenerator |

Found 1027 images belonging to 2 classes.

Found 256 images belonging to 2 classes.

Training

Let's train for 15 epochs -- this may take a few minutes to run.

Do note the values per epoch.

The loss and accuracy are a great indication of how training is progressing. The network makes a guess as to the classification of each training image, and the loss measures how far that guess is from the known label. Accuracy is the proportion of correct guesses.
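As a small illustration of the accuracy metric, the sketch below turns predicted probabilities into class guesses and counts how many match the labels. The 0.5 threshold and the function name are assumptions for this example.

```python
def accuracy(labels, probabilities, threshold=0.5):
    """Fraction of examples whose thresholded prediction matches the label."""
    correct = sum(
        1 for label, prob in zip(labels, probabilities)
        if (prob > threshold) == bool(label)
    )
    return correct / len(labels)


labels = [0, 1, 1, 0]
probabilities = [0.1, 0.9, 0.4, 0.2]   # the third guess is wrong
print("accuracy:", accuracy(labels, probabilities))
```

Note that accuracy only counts whether each guess lands on the right side of the threshold, while the loss also reflects how confident each guess was.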

1 | history = model.fit_generator( |

WARNING:tensorflow:From /usr/local/lib/python3.6/dist-packages/tensorflow/python/ops/math_ops.py:3066: to_int32 (from tensorflow.python.ops.math_ops) is deprecated and will be removed in a future version.

Instructions for updating:

Use tf.cast instead.

Epoch 1/15

8/8 [==============================] - 2s 216ms/step - loss: 0.6814 - acc: 0.5000

9/9 [==============================] - 11s 1s/step - loss: 0.8579 - acc: 0.5268 - val_loss: 0.6814 - val_acc: 0.5000

Epoch 2/15

8/8 [==============================] - 2s 206ms/step - loss: 0.8800 - acc: 0.5000

9/9 [==============================] - 9s 955ms/step - loss: 0.6284 - acc: 0.5881 - val_loss: 0.8800 - val_acc: 0.5000

Epoch 3/15

8/8 [==============================] - 2s 207ms/step - loss: 0.6334 - acc: 0.5781

9/9 [==============================] - 8s 940ms/step - loss: 0.6655 - acc: 0.6602 - val_loss: 0.6334 - val_acc: 0.5781

Epoch 4/15

8/8 [==============================] - 2s 207ms/step - loss: 0.4119 - acc: 0.8711

9/9 [==============================] - 8s 938ms/step - loss: 0.5446 - acc: 0.7790 - val_loss: 0.4119 - val_acc: 0.8711

Epoch 5/15

8/8 [==============================] - 2s 206ms/step - loss: 1.3072 - acc: 0.8125

9/9 [==============================] - 9s 956ms/step - loss: 0.4118 - acc: 0.8189 - val_loss: 1.3072 - val_acc: 0.8125

Epoch 6/15

8/8 [==============================] - 2s 205ms/step - loss: 2.0815 - acc: 0.7852

9/9 [==============================] - 9s 960ms/step - loss: 0.1871 - acc: 0.9309 - val_loss: 2.0815 - val_acc: 0.7852

Epoch 7/15

8/8 [==============================] - 2s 208ms/step - loss: 1.7291 - acc: 0.7539

9/9 [==============================] - 9s 1s/step - loss: 0.6104 - acc: 0.8384 - val_loss: 1.7291 - val_acc: 0.7539

Epoch 8/15

8/8 [==============================] - 2s 211ms/step - loss: 1.3320 - acc: 0.8242

9/9 [==============================] - 9s 956ms/step - loss: 0.1349 - acc: 0.9406 - val_loss: 1.3320 - val_acc: 0.8242

Epoch 9/15

8/8 [==============================] - 2s 205ms/step - loss: 1.7191 - acc: 0.7969

9/9 [==============================] - 8s 942ms/step - loss: 0.1120 - acc: 0.9581 - val_loss: 1.7191 - val_acc: 0.7969

Epoch 10/15

8/8 [==============================] - 2s 201ms/step - loss: 0.3554 - acc: 0.9062

9/9 [==============================] - 8s 938ms/step - loss: 0.0666 - acc: 0.9718 - val_loss: 0.3554 - val_acc: 0.9062

Epoch 11/15

8/8 [==============================] - 2s 205ms/step - loss: 1.1811 - acc: 0.8398

9/9 [==============================] - 8s 941ms/step - loss: 0.1556 - acc: 0.9669 - val_loss: 1.1811 - val_acc: 0.8398

Epoch 12/15

8/8 [==============================] - 2s 204ms/step - loss: 1.2945 - acc: 0.8398

9/9 [==============================] - 8s 938ms/step - loss: 0.0237 - acc: 0.9932 - val_loss: 1.2945 - val_acc: 0.8398

Epoch 13/15

8/8 [==============================] - 2s 203ms/step - loss: 1.0270 - acc: 0.8945

9/9 [==============================] - 9s 969ms/step - loss: 0.0034 - acc: 1.0000 - val_loss: 1.0270 - val_acc: 0.8945

Epoch 14/15

8/8 [==============================] - 2s 203ms/step - loss: 0.8244 - acc: 0.7773

9/9 [==============================] - 8s 940ms/step - loss: 0.5428 - acc: 0.9007 - val_loss: 0.8244 - val_acc: 0.7773

Epoch 15/15

8/8 [==============================] - 2s 207ms/step - loss: 1.0451 - acc: 0.8633

9/9 [==============================] - 9s 946ms/step - loss: 0.0516 - acc: 0.9873 - val_loss: 1.0451 - val_acc: 0.8633

Running the Model

Let's now take a look at actually running a prediction using the model. This code lets you choose one or more files from your file system. It then uploads them and runs them through the model, giving an indication of whether the object is a horse or a human.

1 | import numpy as np |

Saving zhenjianzhao.jpg to zhenjianzhao (3).jpg

[0.]

zhenjianzhao.jpg is a horse

Visualizing Intermediate Representations

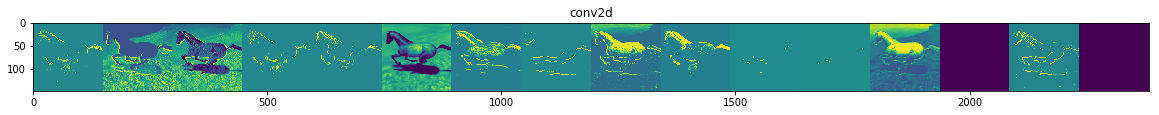

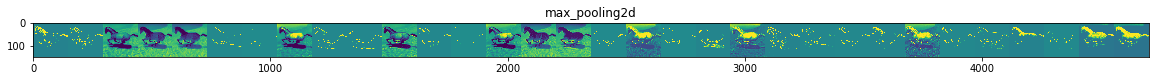

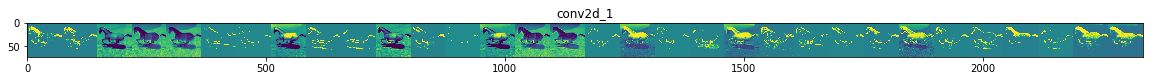

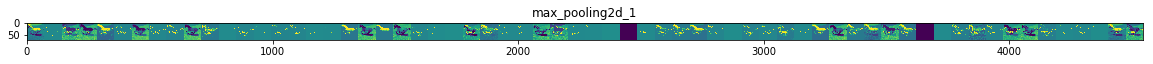

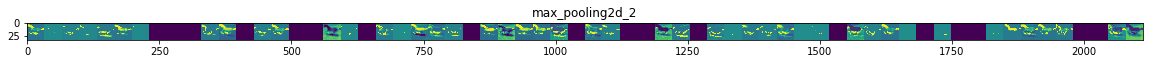

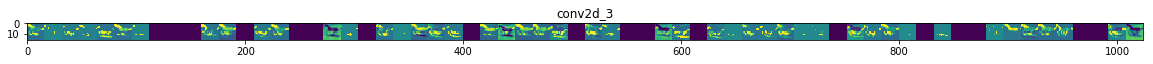

To get a feel for what kind of features our convnet has learned, one fun thing to do is to visualize how an input gets transformed as it goes through the convnet.

Let's pick a random image from the training set, and then generate a figure where each row is the output of a layer, and each image in the row is a specific filter in that output feature map. Rerun this cell to generate intermediate representations for a variety of training images.

1 | import numpy as np |

/usr/local/lib/python3.6/dist-packages/ipykernel_launcher.py:43: RuntimeWarning: invalid value encountered in true_divide

As you can see, we go from the raw pixels of the images to increasingly abstract and compact representations. The representations downstream start highlighting what the network pays attention to, and they show fewer and fewer features being "activated"; most are set to zero. This is called "sparsity." Representation sparsity is a key feature of deep learning.
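Sparsity can be quantified as the fraction of activations that are exactly zero. The sketch below assumes ReLU activations (the model definition is not shown in full, so this is an assumption) and uses made-up numbers in place of a real feature map.

```python
def relu(values):
    """Rectified linear unit: negative inputs become zero."""
    return [max(0.0, value) for value in values]


def sparsity(activations):
    """Fraction of activations that are exactly zero."""
    zeros = sum(1 for value in activations if value == 0.0)
    return zeros / len(activations)


# Hypothetical pre-activation values standing in for one feature map.
pre_activations = [-1.2, 0.7, -0.3, 2.5, -4.0, -0.1, 0.0, 1.1]
feature_map = relu(pre_activations)
print("activations:", feature_map)
print("sparsity:", sparsity(feature_map))
```

Applied to the real intermediate outputs, we would expect this fraction to grow in deeper layers, which is the pattern the visualizations suggest.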

These representations carry increasingly less information about the original pixels of the image, but increasingly refined information about the class of the image. You can think of a convnet (or a deep network in general) as an information distillation pipeline.

Clean Up

Before running the next exercise, run the following cell to terminate the kernel and free memory resources:

1 | import os, signal |